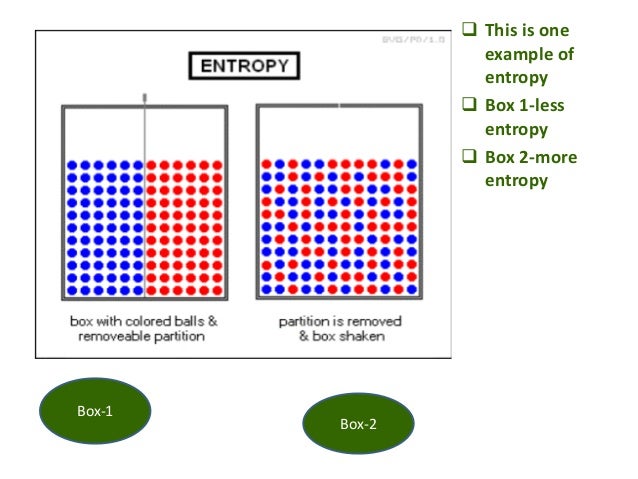

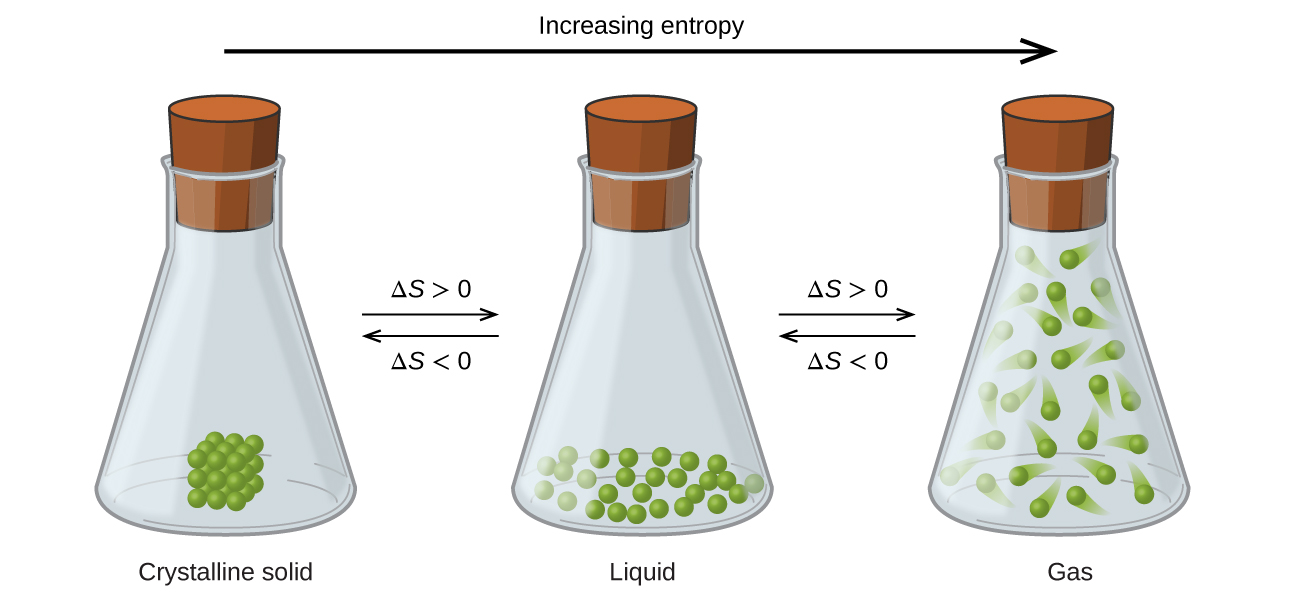

Entropy is one of the most fundamental concepts of physical science, with far-reaching consequences ranging from cosmology to chemistry. High-entropy alloys (HEA) have a unique chemical composition that makes them strong, ductile, and resistant to wear-and-tear even at high temperatures. Entropy is also widely misrepresented as a measure of disorder, as we discuss below.

Entropy is commonly described as a measure of disorder, and it is particularly relevant to the second law of thermodynamics, which states that the entropy of an isolated system increases in the course of any spontaneous change. In this picture, entropy increases when a system increases its disorder. A solid is fairly ordered, especially if it is crystalline; melt it and you get more disorder, because the molecules can now slide past one another. The picture is less helpful for a reaction in which all species are gases and there are equal numbers of molecules on both sides of the equation: there the entropy change cannot be predicted from the molecule count alone, although it is likely not zero.

Entropy has two precise definitions in general use. The one most useful here is Boltzmann's, S(E) = k ln W(E), where S is the entropy, E is the energy of an isolated system (the easiest kind of system to consider), k is Boltzmann's constant, and W(E) is the number of states of the system that have energy E. For example, if the system has exactly one state with energy E, then S = k ln 1 = 0; if there are 2 states with energy E, then S = k ln 2. Note that entropy is a state property: the change in the entropy of a system depends only on its initial and final states, not on the path taken between them.

Now "organization" has no precise definition; it is rather a descriptive label we might apply to categorize states of the system. For example, consider a model of atoms adsorbed on a crystal surface. Let's model the surface as a square 3x3 array of sites and put 5 atoms on it. A priori, there are 9!/(5!(9-5)!) = 126 ways of putting 5 atoms on 9 sites. Some of those states look "organized" to us, and some look "disorganized." Which of them are "organized"? That's to some extent a matter of opinion, but a common definition is "even" or "symmetrical." So, for example, the state with an atom on every other site, in a checkerboard pattern, looks "organized," as if the sites formed a 2D crystal.

Now suppose we observe this system and have some way of knowing, either from measurement or from the method of preparation, whether it is in an "organized" (symmetric, checkerboard-like) or an "unorganized" state. There is only one "organized" state, so if we know the system is in it, its entropy is k ln 1 = 0. On the other hand, if we know the system is in an "unorganized" state, there are 125 possible states it could be in, so its entropy is k ln 125, which is higher. So the entropy of the system when it is known to be "organized" is lower than when it is known not to be.

States we are willing to call "organized" generally have severe restrictions on the degrees of freedom: their values are distributed symmetrically in some way, e.g. the atoms are distributed uniformly or in a regular array, the velocities are all the same, and so forth. Because of these restrictions, it is almost always the case that fewer states of the system satisfy the restrictions than do not. In short, it may help, when you hear "organized", to mentally translate it as "has some symmetry restrictions on how the degrees of freedom (e.g. atoms) are arranged." Hopefully it is intuitive that there are far fewer ways of arranging atoms symmetrically than not, and therefore that the entropy of systems known to be symmetric is lower than the entropy of systems that are not.
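The 3x3 lattice argument can be checked by brute force. The Python sketch below enumerates every placement of 5 atoms on 9 sites, treats as "organized" only the checkerboard placement (atoms on the four corners and the center, which is our assumed reading of "checkerboard" for 5 atoms), and applies S = k ln W to each group. The helper names `boltzmann_entropy` and `is_checkerboard` are hypothetical, chosen for illustration; entropies are printed in units of k.

```python
import math
from itertools import combinations

# Boltzmann's constant in J/K (exact SI value).
K_B = 1.380649e-23


def boltzmann_entropy(num_states: int, k: float = K_B) -> float:
    """Hypothetical helper: S = k ln W for an isolated system with W states."""
    return k * math.log(num_states)


# 3x3 surface; sites are numbered 0..8 row by row.
SIDE = 3
N_SITES = SIDE * SIDE
N_ATOMS = 5

# Every way of choosing which 5 of the 9 sites are occupied.
states = [frozenset(c) for c in combinations(range(N_SITES), N_ATOMS)]


def is_checkerboard(occupied: frozenset) -> bool:
    """Assumed 'organized' test: every atom sits on a site with even (row + col)."""
    return all((s // SIDE + s % SIDE) % 2 == 0 for s in occupied)


organized = [s for s in states if is_checkerboard(s)]
unorganized = [s for s in states if not is_checkerboard(s)]

print(len(states))                      # 126, i.e. 9!/(5!(9-5)!)
print(len(organized), len(unorganized))  # 1 125
print(boltzmann_entropy(len(organized)) / K_B)    # 0.0
print(boltzmann_entropy(len(unorganized)) / K_B)  # ln 125, about 4.83
```

Swapping in a different test for "organized" changes W for each group, and therefore the two entropies, which is consistent with the point that the label is partly a matter of opinion.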
